Background

The Mitigation Planning Portal (MPP) is a web application developed by FEMA to track and report hazard mitigation plans and related data elements across all 10 regions. Users can create mitigation plans, manage compliance, and oversee funding across a national network of states and local jurisdictions.

"Consolidated space of record for mitigation plan status."

— Stakeholder workshop, FEMA

Understanding the Users

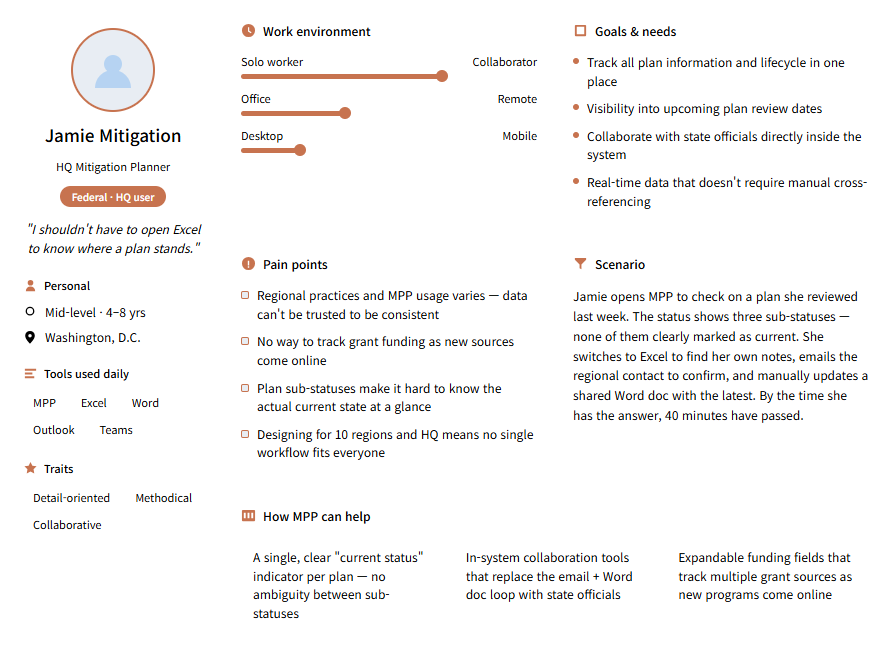

MPP serves a wide range of FEMA users, each with a different level of administrative access. Users are organized into three primary groups: Federal, Regional, and State, each with its own set of permissions.

To cover the complexity and design process in greater depth, this case study focuses on federal users.

The design work centered on the Federal Read & Write user — the role with the broadest scope and the most friction in the existing system. Meet Sandra:

Pain Points

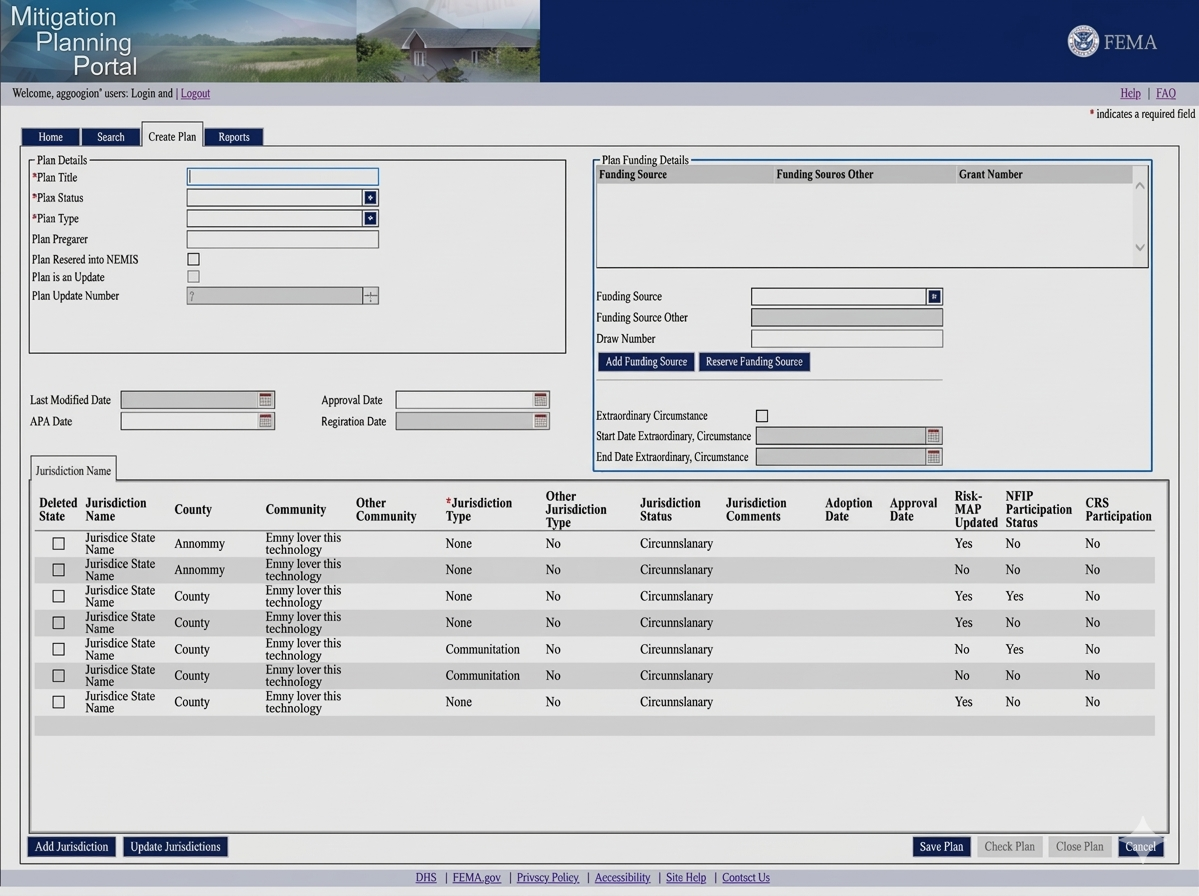

The stakeholder workshops showed that several user pain points were overlooked during the transition from the Legacy version to MPP 1.0. The update shipped without resolving many of the Legacy version's problems.

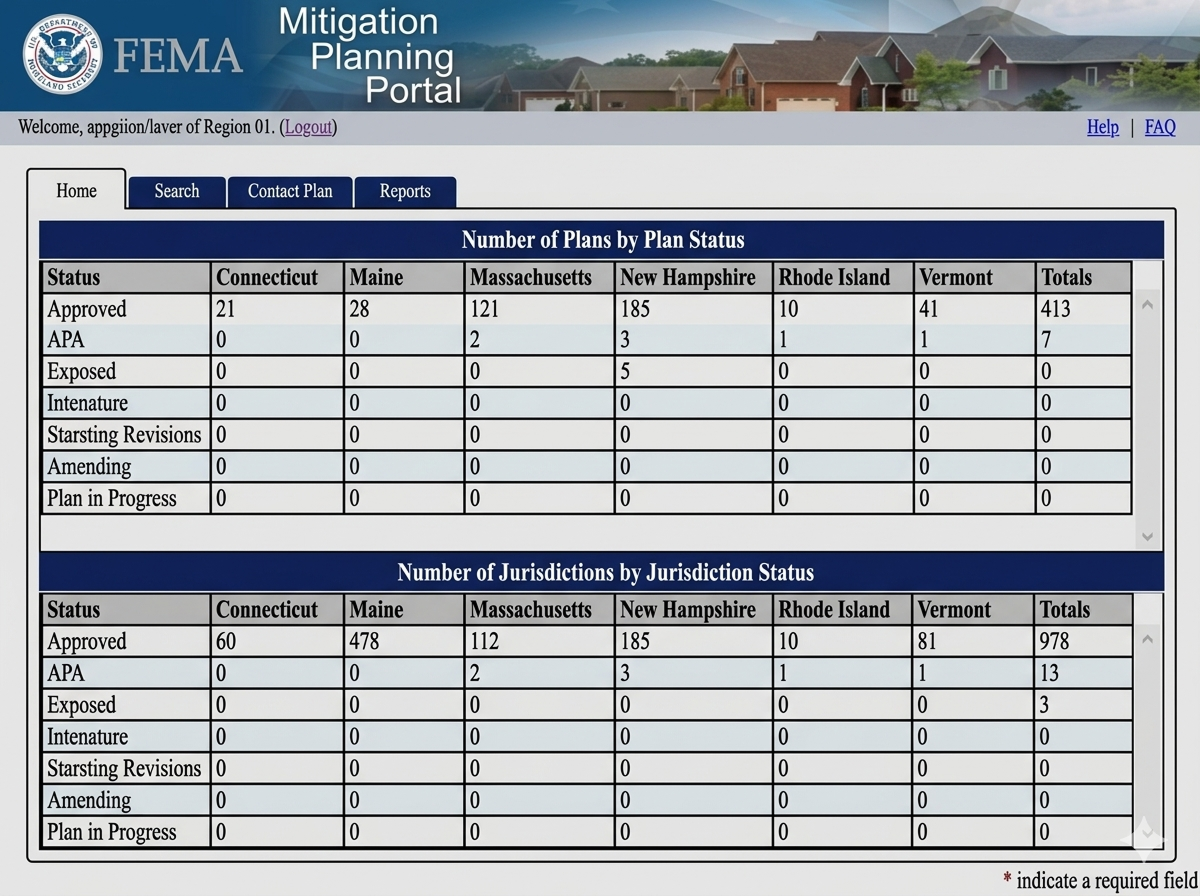

Broken User Flow

The status dashboard provided a high-level view of plan status, but did not offer users a direct path to the plans they were working on. Users had to navigate via search to resume their work.

Confusing review process

The current design gives users no reliable way to log their activity or leave comments tied to the plan they are working on.

Can't easily track updates

Users can either receive all notifications for a plan or none at all. For those working with 100 or more plans, the volume becomes overwhelming, making it nearly impossible to prioritize.

"Manually entering Tribal and entering can be tedious."

— Stakeholder workshop, FEMA

Legacy design screenshots

How can we improve?

- How might we allow users to quickly resume plans they were previously working on?

- What would a "saved" or "flagged" state look like in this context?

How can we improve?

- How might we reorganize the information displayed on the screen to reduce cognitive load?

- What level of detail does an analyst actually need at first glance vs. on demand?

- How should users move between plan sections without losing their place?

Ideation

Building and refining ideas

After reviewing both prior versions of MPP, the client and I iterated on design directions to align with user needs and business goals. The focus was on connecting the missing dots, simplifying every step of the process, and making information transparent at every stage.

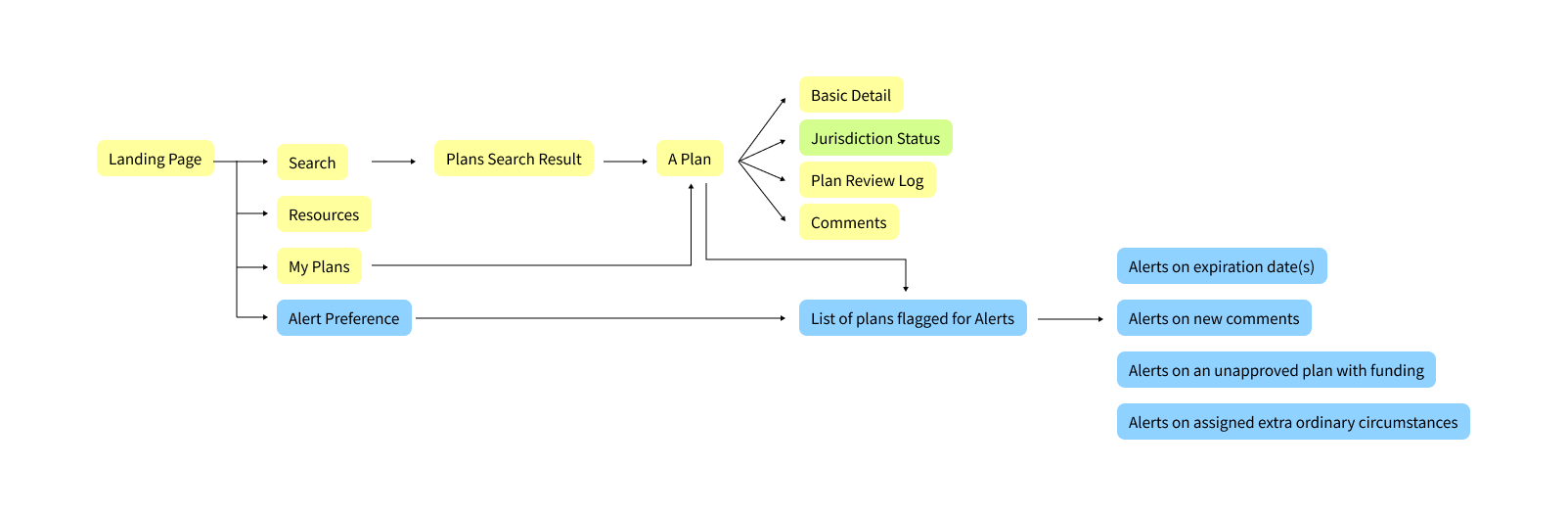

Mapping out the plan management process

- From Landing Page to Plan Detail — tracing how users navigate to and through individual plans

- Clustered plan details are reorganized into a simplified, focused interface

- Alert Preferences were introduced to help users follow the plans that need extra attention

Features carried over from Legacy MPP are indicated in yellow. New features added for MPP 2.0 are in blue. Green indicates design suggestions of mine that were not included in the delivered version but are documented here as part of this case study.

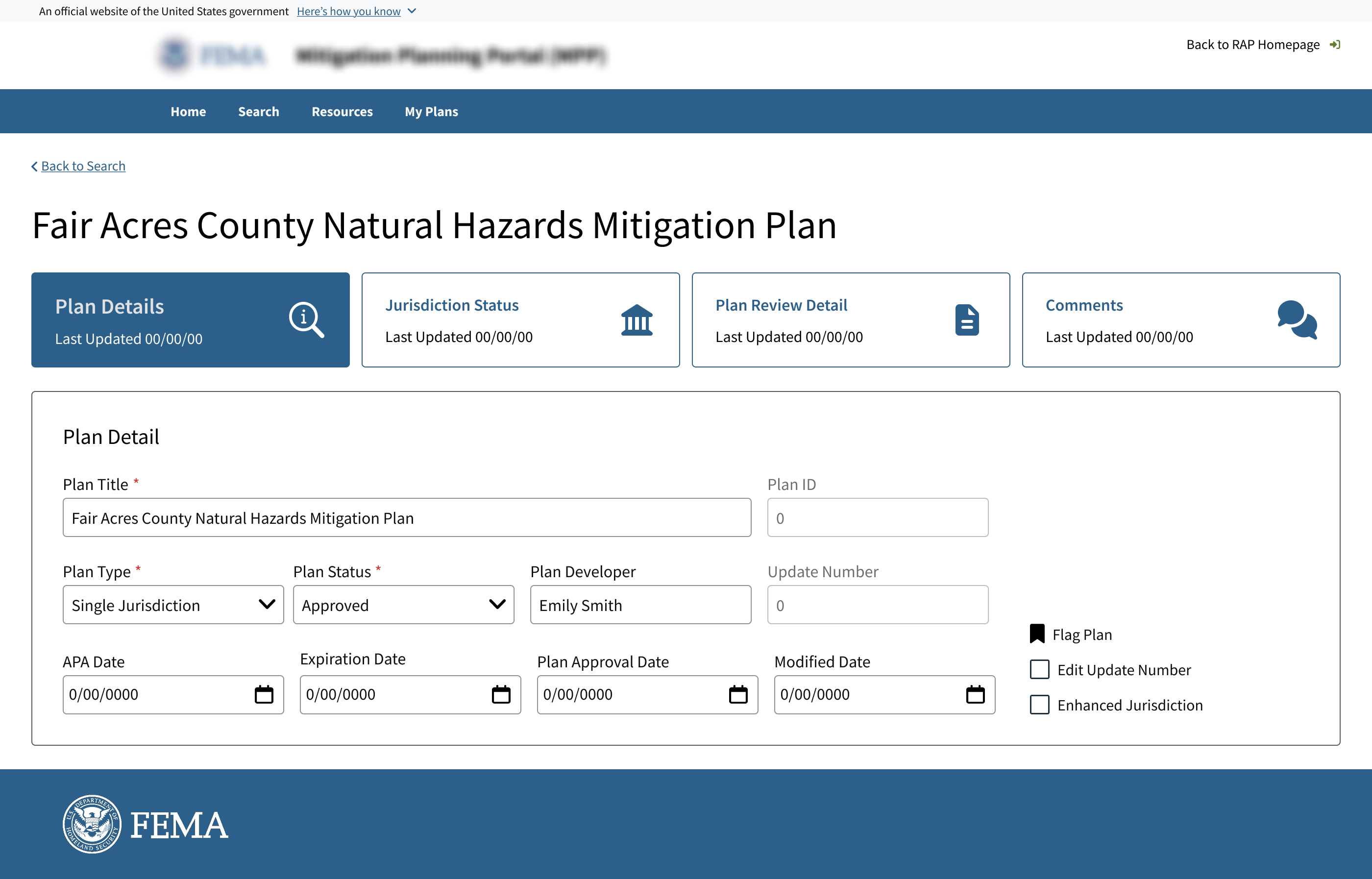

Final Designs

The client didn’t want to deviate too much from the original design. I didn’t want this to be a “make it look pretty” project, so I pushed for a bigger challenge and explained how the proposed design would improve the user experience.

Although some were accepted, a number of design updates were rejected due to time constraints and conflicts with the existing dev environment. For this case study, I am sharing the design I initially proposed.

Flag Plans — Introduced a feature that lets users mark any plan and return to it directly from the dashboard. Accessible from a dedicated My Plans tab, the saved plan list eliminates the need to reconstruct a search for work already in progress.

Jurisdiction Status tab — Separated the Jurisdiction Status table into its own dedicated tab, giving the data room to breathe. Framed to the client around cognitive load: sectioning content helps users process information faster and reduces the likelihood of input errors.

My Plans tab + Flag Plans feature

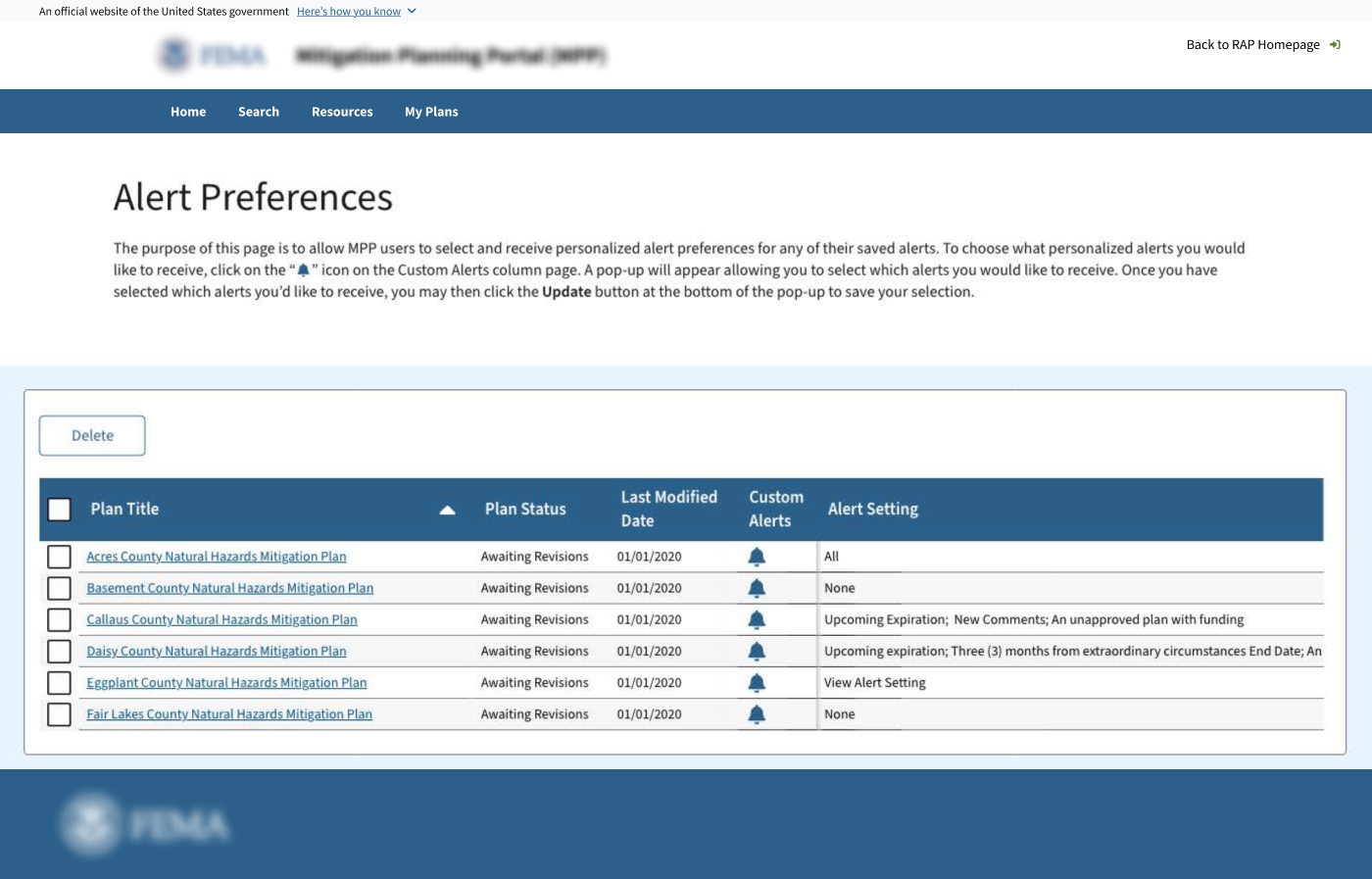

Alert Preferences

The client-approved alert system used a modal pop-up triggered by a bell icon within a dense data table. Each time a planner configured alerts for a plan, they lost their place in the list, disrupting context instead of supporting it.

In government applications, modals are typically associated with errors, confirmations, or warnings. Using a modal for settings suggests urgency when users are simply configuring preferences.

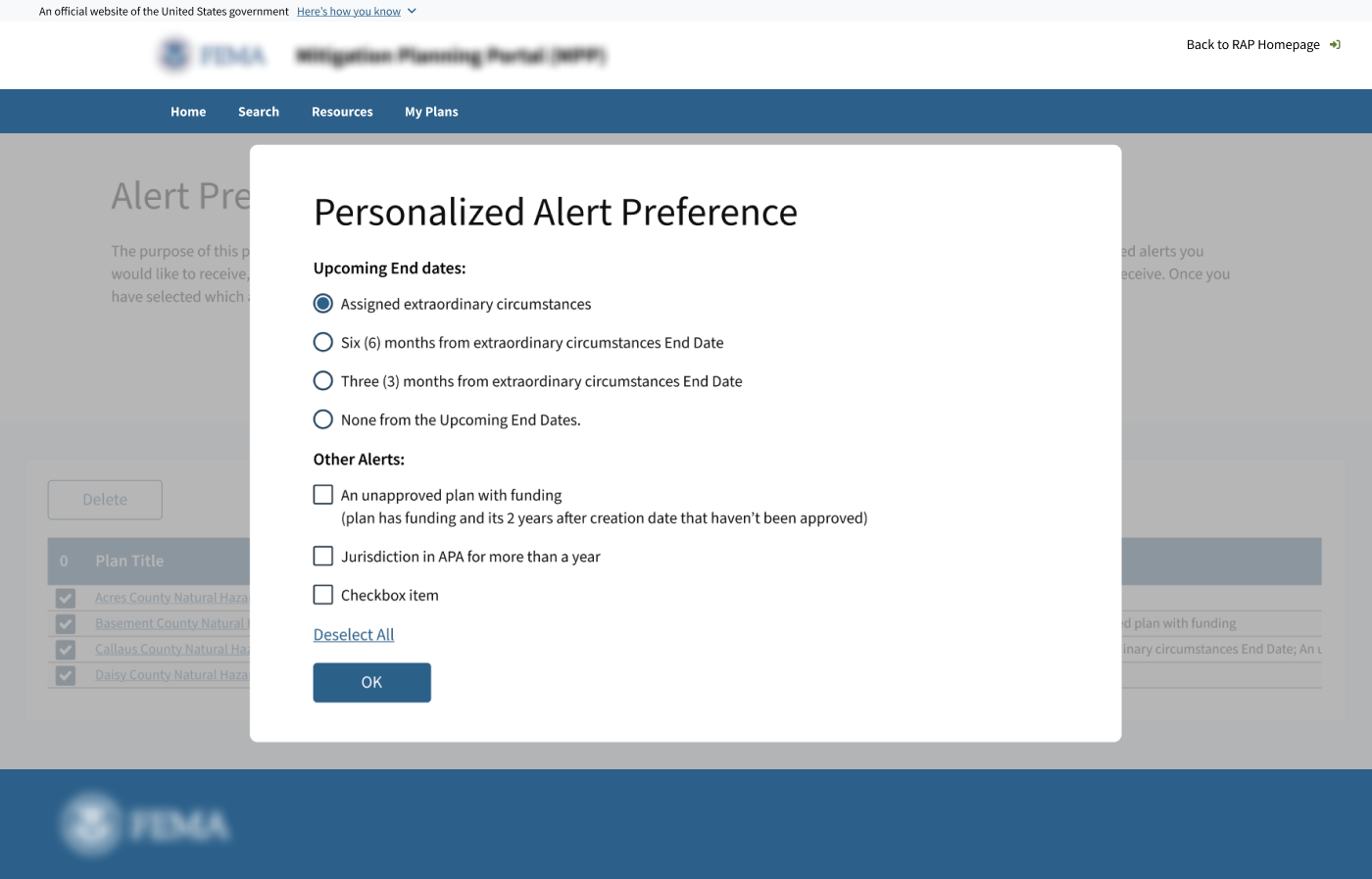

The split-panel approach

The redesigned Alert Preferences page uses a split-panel layout, with a card list of saved plans on the left and an inline settings panel on the right. Selecting a plan opens its alert configuration without leaving or interrupting the list view.

Each plan card displays a summary of active alerts, such as status changes, expiration windows, and review activity, allowing planners to quickly identify which plans have alerts configured. Plans without alerts are visually dimmed.

Expiration alerts use radio buttons — selecting a time window is a mutually exclusive choice, and presenting options as a card-style group makes that logic visible. Status and comment alerts use toggles — they are independent, binary, and the toggle communicates that directly.
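The control choices above map onto a simple data shape, which is one way to explain the reasoning to developers. The TypeScript below is a minimal sketch under assumptions, not the delivered MPP implementation: the type and function names, the specific time windows ("30d", "60d", "90d"), and the "none" option are all hypothetical placeholders. It shows why a single-valued field fits a radio group, why independent booleans fit toggles, and how a plan card's summary and dimmed state could be derived from the same settings.

```typescript
// Hypothetical model of one plan's alert settings. All names and values
// here are illustrative assumptions, not taken from the MPP codebase.

// A union type holds exactly one value at a time, which is the same
// constraint a radio group expresses in the UI.
type ExpirationWindow = "none" | "30d" | "60d" | "90d";

interface AlertPreferences {
  expirationWindow: ExpirationWindow; // radio group: mutually exclusive
  statusChanges: boolean;             // toggle: independent on/off
  comments: boolean;                  // toggle: independent on/off
}

// Build the short list of active alerts shown on a plan card.
function summarizeAlerts(prefs: AlertPreferences): string[] {
  const summary: string[] = [];
  if (prefs.statusChanges) summary.push("Status changes");
  if (prefs.expirationWindow !== "none") {
    summary.push(`Expiration (${prefs.expirationWindow})`);
  }
  if (prefs.comments) summary.push("Comments");
  return summary;
}

// A card is dimmed when the plan has no alerts configured at all.
function isDimmed(prefs: AlertPreferences): boolean {
  return summarizeAlerts(prefs).length === 0;
}

const example: AlertPreferences = {
  expirationWindow: "90d",
  statusChanges: true,
  comments: false,
};
console.log(summarizeAlerts(example), isDimmed(example));
```

Because the expiration window is one field rather than several flags, two windows cannot be selected at once, so the interface never has to resolve a conflicting state.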

What Success Looked Like Without Metrics

This project did not include a formal usability study or post-launch analytics access. That is often the reality of government UX work: baseline data rarely exists, and access to usage analytics is limited. Success had to be measured by other means.

"Because this project lacked baseline analytics, design decisions were grounded in direct stakeholder workshop findings and validated through client review cycles."

What I'd Do Differently

This project reinforced something I keep relearning: in enterprise and government UX, the hardest design problems aren't on the screen. They're in the room — in competing stakeholder priorities, unclear mandates, and decisions that take months because no one owns them. Knowing when to advocate, when to reframe, and when to accept a direction and ship is as much the work as the artifact itself.